Most businesses treat customer reviews as a chore. Reply to the good ones, apologise for the bad ones, move on. The replies are rarely strategic, often generic, and — if you’re using off-the-shelf AI — frequently sound like they were written by the same robot replying to every business in the country.

We built something substantially different. What started as a solution to a simple problem — helping our clients reply to reviews faster without losing their brand voice — evolved into a three-layer AI system that does things no generic tool can do.

Here’s what we built, how it works, and why it matters.

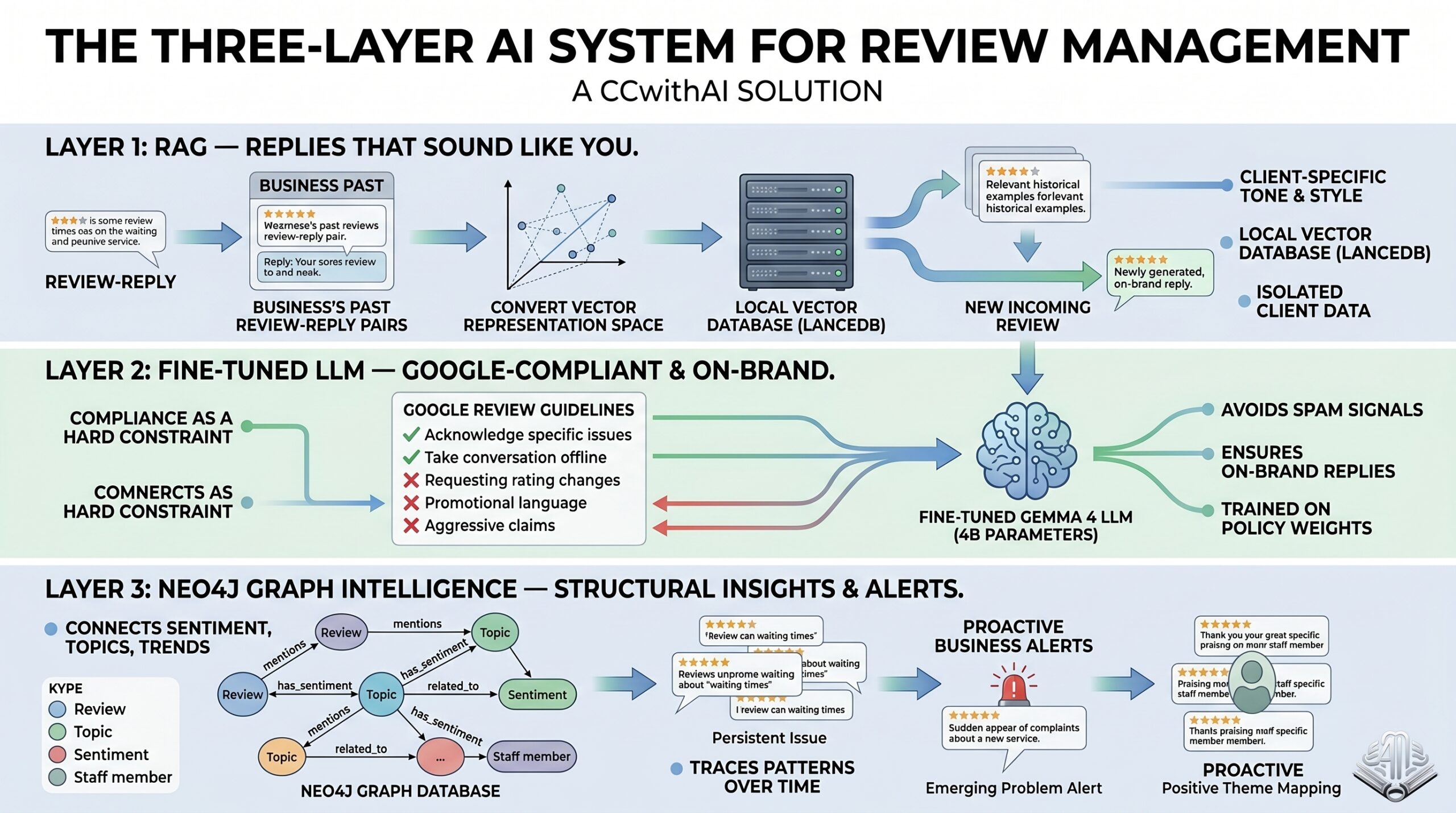

The Three Layers

Layer 1: RAG — Replies That Sound Like You

The foundation of the system is Retrieval-Augmented Generation (RAG). The core idea: instead of asking AI to “write a professional reply”, the system learns from your own past replies and uses them as the template for everything it generates.

When you onboard a new client, you feed the system a batch of their historical reviews alongside the actual replies they sent. Each review-reply pair gets converted into a vector embedding — a mathematical representation of its meaning and tone — and stored in a local vector database (LanceDB).
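As a rough illustration of the indexing step, the sketch below embeds each historical review and keeps it next to the reply that was actually sent. It is not the production code. A toy bag-of-words `embed` function stands in for a real embedding model, a plain Python list stands in for the LanceDB table, and only the review text is embedded. All of these choices, and the names `embed`, `ReviewPair`, and `index_pairs`, are assumptions made for the example.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


def embed(text: str) -> dict[str, float]:
    """Toy stand-in for an embedding model: an L2-normalised word-count vector."""
    counts = Counter(re.findall(r"[a-z']+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {word: c / norm for word, c in counts.items()}


@dataclass
class ReviewPair:
    review: str
    reply: str
    vector: dict[str, float]  # embedding of the review text (an assumption)


def index_pairs(history: list[tuple[str, str]]) -> list[ReviewPair]:
    """Embed each historical review and store it alongside the reply that was sent."""
    return [ReviewPair(review, reply, embed(review)) for review, reply in history]


# Hypothetical onboarding data for one client.
table = index_pairs([
    ("The fitter arrived two hours late.",
     "Sorry for the delay, Sam. Please call us so we can put this right."),
    ("Lovely new bathroom, great team!",
     "Thank you, Priya. We loved working on it."),
])
```

In a real deployment the list would be replaced by a vector database table and `embed` by a proper embedding model. The shape of the stored record (review, reply, vector) is the part that carries over.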

When a new review comes in, the system finds the most similar past reviews from that client’s history and retrieves how they actually replied at the time. These become live examples — few-shot context that tells the AI: “here’s a complaint about a delayed job, and here’s exactly how this client handled it before.” The generated reply follows the same structure, tone, warmth, and register.

The result is a response that sounds like it came from the same person who’s always managed this client’s reviews — because the AI is literally modelling that person’s writing style from real examples.
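The retrieval and few-shot steps described above can be sketched in a few lines. This is a simplified model under stated assumptions: the toy `embed` function is a stand-in for a real embedding model, similarity is plain cosine similarity, and the prompt layout in `build_prompt` is hypothetical rather than the format the system actually uses.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: an L2-normalised word-count vector."""
    counts = Counter(re.findall(r"[a-z']+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {word: c / norm for word, c in counts.items()}


def cosine(a, b):
    """Cosine similarity of two normalised sparse vectors (their dot product)."""
    return sum(weight * b.get(word, 0.0) for word, weight in a.items())


def retrieve(new_review, history, k=2):
    """Return the k (review, reply) pairs whose reviews are most similar to the new one."""
    query = embed(new_review)
    ranked = sorted(history, key=lambda pair: cosine(query, embed(pair[0])), reverse=True)
    return ranked[:k]


def build_prompt(new_review, examples):
    """Lay out the retrieved pairs as few-shot examples ahead of the new review."""
    shots = "\n\n".join(f"Review: {rv}\nReply: {rp}" for rv, rp in examples)
    return f"{shots}\n\nReview: {new_review}\nReply:"


# Hypothetical client history.
history = [
    ("The job was delayed by a week.", "Sorry about the delay, Sam. Please call us."),
    ("Brilliant service, very tidy work.", "Thank you, Priya!"),
    ("Quote was delayed and nobody called.", "Apologies, Alex. We are reviewing this."),
]
examples = retrieve("My job was delayed again.", history, k=2)
prompt = build_prompt("My job was delayed again.", examples)
```

Here the two delay-related reviews are retrieved and the unrelated praise is left out, so the model sees only how this client has handled similar complaints before.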

Data isolation is strict. Each client’s history is completely separated. A bathroom fitter’s tone doesn’t bleed into a car dealership’s replies. Each profile also holds persistent global instructions — things like “always sign off with ‘The GS Bathroom Team’” or “never mention prices in a public reply” — that are baked into every generation.
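One simple way to picture the isolation and the persistent instructions is a store keyed by client, where every lookup goes through the client's own key. The sketch below is illustrative only. `ClientProfile`, `ProfileStore`, the client IDs, and the "City Motors" profile are hypothetical names, and the real system may enforce separation differently (for example with one database table per client).

```python
from dataclasses import dataclass, field


@dataclass
class ClientProfile:
    name: str
    global_instructions: list[str] = field(default_factory=list)
    history: list[tuple[str, str]] = field(default_factory=list)  # (review, reply) pairs


class ProfileStore:
    """Keeps each client's data under its own key so lookups never cross clients."""

    def __init__(self):
        self._profiles: dict[str, ClientProfile] = {}

    def add(self, client_id: str, profile: ClientProfile) -> None:
        self._profiles[client_id] = profile

    def history(self, client_id: str) -> list[tuple[str, str]]:
        """Return only this client's past review-reply pairs."""
        return self._profiles[client_id].history

    def system_prompt(self, client_id: str) -> str:
        """Build the standing instructions that precede every generation."""
        profile = self._profiles[client_id]  # raises KeyError for an unknown client
        rules = "\n".join(f"- {rule}" for rule in profile.global_instructions)
        return f"You write review replies for {profile.name}.\n{rules}"


store = ProfileStore()
store.add("gs-bathrooms", ClientProfile(
    name="GS Bathroom",
    global_instructions=[
        "Always sign off with 'The GS Bathroom Team'.",
        "Never mention prices in a public reply.",
    ],
    history=[("Great tiling work.", "Thank you! The GS Bathroom Team")],
))
store.add("city-motors", ClientProfile(name="City Motors"))
prompt = store.system_prompt("gs-bathrooms")
```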

Layer 2: A Fine-Tuned LLM Trained on Google’s Review Guidelines

Here’s where it gets more interesting — and where this system diverges from anything you’d get from a generic AI tool.

We fine-tuned a 4-billion-parameter language model (based on Gemma 4) specifically on Google’s guidelines for what can and cannot be included in a review reply.

Why does this matter? Because Google has a clear set of rules around review responses, and violating them — even unintentionally — can get your reply removed, flag your listing, or damage your ranking. Generic AI doesn’t know these rules in any reliable way. It will sometimes get them right, sometimes get them wrong, and it has no way to tell the difference.

Our fine-tuned model has been trained to treat compliance as a hard constraint, not a soft guideline. It has learned:

- What’s not allowed: using a reply to ask reviewers to change their rating, disclosing personal information about the customer, using promotional language such as discount offers, making aggressive counter-claims, or writing anything that could be flagged as harassment

- What’s strongly discouraged: overly templated language that triggers spam signals, replies that don’t address the specific review, and keyword-stuffing

- What works: acknowledging the specific issue raised, keeping negative replies brief and taking the conversation offline, thanking reviewers by first name when possible, and matching the emotional register of the review

The practical result: every reply the system generates is not just on-brand — it’s compliant. You’re not gambling on whether the AI happens to know Google’s policies this week. Compliance is baked into the model weights.

This is particularly valuable for agencies managing review responses across multiple clients, where one badly-worded AI reply could create a problem that takes weeks to resolve.
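For readers curious what fine-tuning data for this kind of task might look like, here is a minimal sketch of one training example in a chat-style layout, serialised as JSONL. The record structure, the system prompt wording, and the sample review and reply are all assumptions for illustration. The article does not describe the actual dataset or its format.

```python
import json

# Hypothetical system prompt; the real training instructions are not published.
SYSTEM = "Write a reply to this Google review that follows Google's review-reply guidelines."


def make_record(review: str, compliant_reply: str) -> dict:
    """Package one review and a guideline-compliant reply as a chat-style training example."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM},
            {"role": "user", "content": review},
            {"role": "assistant", "content": compliant_reply},
        ]
    }


records = [
    make_record(
        "Waited three weeks for a call back. One star.",
        # Brief, acknowledges the specific issue, and moves the conversation offline.
        "Hi Sam, sorry about the wait. Please call the office so we can sort this out directly.",
    ),
]

# One JSON object per line (JSONL), a common on-disk layout for fine-tuning data.
jsonl = "\n".join(json.dumps(record) for record in records)
```

The sample reply follows the "what works" pattern from the list above: it is short, names the issue, and avoids asking for a rating change or offering a discount.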

Layer 3: Neo4j Graph Intelligence — Turning Reviews Into Business Insight

The third layer is where the system moves beyond reply generation and starts doing something genuinely new.

Alongside the vector database, all reviews and their metadata (sentiment, topic, date, location where relevant, and recurring themes) are stored in a Neo4j graph database. In a graph database, data isn’t stored as rows in tables. It’s stored as nodes and the relationships between them. Entities connect to each other: a review connects to a topic, a topic connects to a time period, a time period connects to a pattern, a pattern connects to an alert.

This structure lets the system do something a standard vector search can’t: it can trace patterns across reviews over time and surface insights about the business itself.
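To make the chain of relationships concrete, the sketch below models it with a tiny in-memory graph and walks from a review to an alert. It is a stand-in for Neo4j, not a use of it. The `Graph` class, the edge labels (`MENTIONS`, `SEEN_IN`, `SHOWS`, `RAISES`), and the node names are all hypothetical.

```python
from collections import defaultdict


class Graph:
    """Tiny in-memory graph: nodes are strings, edges carry a label."""

    def __init__(self):
        self.edges = defaultdict(set)  # (source, label) -> set of target nodes

    def link(self, source, label, target):
        self.edges[(source, label)].add(target)

    def neighbours(self, source, label):
        return self.edges.get((source, label), set())


def trace(graph, start, labels):
    """Follow one edge label per hop and return every node reached at the end."""
    frontier = {start}
    for label in labels:
        frontier = {t for node in frontier for t in graph.neighbours(node, label)}
    return frontier


g = Graph()
g.link("review:101", "MENTIONS", "topic:waiting times")
g.link("review:102", "MENTIONS", "topic:waiting times")
g.link("topic:waiting times", "SEEN_IN", "period:last six months")
g.link("period:last six months", "SHOWS", "pattern:persistent delays")
g.link("pattern:persistent delays", "RAISES", "alert:waiting times")

# review -> topic -> time period -> pattern -> alert
alerts = trace(g, "review:101", ["MENTIONS", "SEEN_IN", "SHOWS", "RAISES"])
```

In a graph database the same walk would be a single path query. The point of the sketch is only that each hop is a stored relationship rather than something recomputed from text similarity.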

Some of what this enables:

Persistent issue detection. If a plumbing business has received 11 reviews over six months that mention waiting times, the graph will surface this as a persistent theme — even if individual reviews used different language (“took ages”, “had to wait weeks”, “appointment kept being pushed back”). A vector search finds similar reviews. A graph finds relationships between them and tells you how long this has been a problem.
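A minimal sketch of the idea, assuming each review has already been tagged with a topic upstream so that differently worded complaints share one label. The function name, the thresholds, and the sample dates are hypothetical, and a plain list of `(topic, date)` tuples stands in for the graph.

```python
from datetime import date


def persistent_topics(mentions, min_reviews=5, min_span_days=90):
    """Return {topic: (count, span_days)} for topics that recur over a long period.

    mentions is a list of (topic, date) tuples, one per review that raised the topic.
    """
    by_topic = {}
    for topic, day in mentions:
        by_topic.setdefault(topic, []).append(day)

    result = {}
    for topic, days in by_topic.items():
        span = (max(days) - min(days)).days  # days between first and latest mention
        if len(days) >= min_reviews and span >= min_span_days:
            result[topic] = (len(days), span)
    return result


# "took ages", "had to wait weeks" and similar phrasings are assumed to map to one topic.
mentions = [
    ("waiting times", date(2024, 1, 10)),
    ("waiting times", date(2024, 3, 2)),
    ("waiting times", date(2024, 6, 20)),
    ("pricing", date(2024, 6, 1)),
]
found = persistent_topics(mentions, min_reviews=3, min_span_days=90)
```

The span between first and latest mention is what answers the "how long has this been a problem" question.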

Emerging problem alerts. When a new complaint topic appears more than twice in a short window — a new staff member generating friction, a supplier change affecting product quality, a seasonal service issue — the graph spots it as a cluster and flags it before it becomes a pattern. You find out about it from your own data before it shows up as a dip in your star rating.
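The "more than twice in a short window" rule can be sketched as follows. This is one plausible reading, not the system's actual logic: a topic is flagged when it has more than `threshold` mentions inside the window and no mentions before it. The window length, the function name, and the sample data are assumptions.

```python
from datetime import date, timedelta


def emerging_topics(mentions, today, window_days=14, threshold=2):
    """Flag topics with more than `threshold` recent mentions and none before the window."""
    start = today - timedelta(days=window_days)
    recent, older = {}, set()
    for topic, day in mentions:
        if day < start:
            older.add(topic)  # seen before the window, so not a new topic
        elif day <= today:
            recent[topic] = recent.get(topic, 0) + 1
    return sorted(t for t, n in recent.items() if n > threshold and t not in older)


today = date(2024, 6, 30)
mentions = [
    ("rude staff", date(2024, 6, 20)),
    ("rude staff", date(2024, 6, 24)),
    ("rude staff", date(2024, 6, 29)),
    ("waiting times", date(2024, 1, 5)),   # long-running topic, not new
    ("waiting times", date(2024, 6, 18)),
    ("waiting times", date(2024, 6, 19)),
    ("waiting times", date(2024, 6, 25)),
    ("parking", date(2024, 6, 28)),        # only one mention so far
]
flagged = emerging_topics(mentions, today)
```

Only "rude staff" is flagged here: "waiting times" is frequent but already known, and "parking" has not yet crossed the threshold.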

Positive theme mapping. The same logic applies to praise. If customers keep mentioning a specific team member by name, a particular part of the service, or a detail of the experience, the graph maps these as strengths. This feeds back into how the business operates — and how it markets itself.

Relationship context for generation. When the RAG layer retrieves similar past reviews, the graph layer adds context: “this is the third complaint about this issue this quarter.” The reply can acknowledge the pattern appropriately, rather than treating each review as an isolated event.
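A small sketch of how such a context note might be produced before generation, assuming calendar quarters and topic-tagged reviews. The helper names and the wording of the note are hypothetical, and a list of `(topic, date)` tuples stands in for the graph query.

```python
from datetime import date


def quarter(day):
    """Return (year, quarter number) for a date, using calendar quarters."""
    return (day.year, (day.month - 1) // 3 + 1)


def pattern_note(topic, new_review_date, past_mentions):
    """Describe how many complaints on this topic have landed this quarter, counting the new one."""
    earlier = sum(
        1
        for t, d in past_mentions
        if t == topic and quarter(d) == quarter(new_review_date) and d <= new_review_date
    )
    count = earlier + 1
    if count == 1:
        return ""  # first complaint this quarter, nothing to add
    return f"Context: this is complaint number {count} about {topic} this quarter."


past = [
    ("waiting times", date(2024, 4, 3)),
    ("waiting times", date(2024, 5, 17)),
    ("waiting times", date(2024, 2, 1)),   # previous quarter, not counted
    ("pricing", date(2024, 5, 1)),         # different topic, not counted
]
note = pattern_note("waiting times", date(2024, 6, 10), past)
```

The resulting note can be appended to the prompt alongside the retrieved examples, which gives the model a reason to acknowledge the recurrence rather than reply as if the issue were new.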

The cumulative effect is that a business using this system isn’t just managing reviews more efficiently — it’s generating a continuous stream of structured business intelligence from what is normally an unstructured, ignored data source.

Why Local-First

The entire system runs on your machine using local LLM inference via LM Studio. Nothing is sent to an external API. No review text, no customer names, no complaint details leave your premises.

This matters more than it might seem. Customer reviews often contain sensitive specifics — service dates, personal complaints, staff names, location details. The moment that data enters a third-party API, you’ve lost control of it. Running locally means the privacy boundary stays exactly where it should: with you.

It also means no per-query API costs, no rate limits on generation, and no dependency on an internet connection to produce replies.
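For a sense of what local inference looks like in practice, the sketch below sends a chat request to LM Studio's OpenAI-compatible local server. It assumes the server is running at its default address, `http://localhost:1234`, and the model identifier `local-model` and the temperature value are placeholders. The helper names are hypothetical and this is not the system's actual client code.

```python
import json
import urllib.request

# Assumed default address of LM Studio's local server; adjust if configured differently.
LM_STUDIO_URL = "http://localhost:1234/v1/chat/completions"


def build_payload(system_prompt, user_prompt, model="local-model"):
    """Assemble an OpenAI-style chat completion request body."""
    return {
        "model": model,  # placeholder name for whichever model is loaded
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_prompt},
        ],
        "temperature": 0.3,
    }


def generate_reply(system_prompt, user_prompt):
    """POST the request to the local server and return the generated text."""
    body = json.dumps(build_payload(system_prompt, user_prompt)).encode("utf-8")
    request = urllib.request.Request(
        LM_STUDIO_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=60) as response:
        data = json.load(response)
    return data["choices"][0]["message"]["content"]


payload = build_payload("You write review replies for GS Bathroom.", "Review: Great job!\nReply:")
```

Because the request targets `localhost`, the review text never leaves the machine. Calling `generate_reply` requires the local server to be running with a model loaded.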

What Day-to-Day Use Looks Like

For a business receiving 20–40 reviews a week, the workflow is simple:

- Open the interface, select the client

- Paste the new review

- Receive a ready-to-use reply in a few seconds

- Review, optionally edit, post

What previously took 90 minutes of thinking, drafting, and quality-checking across multiple platforms now takes under 20 minutes, and the output is consistently on-brand and compliant.

For agencies managing review responses across multiple clients, the compound time saving is significant. More importantly, the quality floor is raised: no rushed replies written at 11pm, no generic filler that makes the business look like it doesn’t care, and no accidental policy violations that create more work down the line.

The graph intelligence layer surfaces as a regular report — weekly or monthly, depending on review volume — showing emerging patterns, persistent issues, and positive themes that the business can actually act on.

The Bigger Picture

What we’ve built here is a working example of how a small but well-designed AI system — one trained on the right data, built around the right architecture, and given access to the right relationships — can do something genuinely useful that a generic AI assistant cannot.

The fine-tuned model knows Google’s policies not because we told it to check them, but because that knowledge is part of its weights. The graph database finds patterns not because we wrote rules to look for them, but because relationships between data points emerge naturally and can be queried. The RAG layer matches tone not because we described the brand, but because it learned from the brand’s own history.

Each layer does something the others can’t. Together, they produce a system that turns one of the most time-consuming and undervalued tasks in local business management into a source of both efficiency and insight.

If you’re managing reviews across multiple locations or clients and the process feels like it’s always slipping down the priority list, this is worth a conversation.

Interested?

We’re happy to walk you through a live demo — either for your own business or as a white-label tool for your agency.

Contact us at info@ccwithai.com or visit ccwithai.com.

CCwithAI is a Manchester-based AI automation and application development agency. We build practical AI tools for UK businesses — systems that solve real problems using the right architecture, not the most obvious one.